When Cloud Migration Exposes Timing Discrepancies

A Sensible Architecture Decision

A global investment bank had spent the better part of two years modernising its infrastructure. Like most institutions of its size, it was balancing two competing realities: the need to move quickly into cloud environments to support new trading initiatives, and the obligation to maintain the kind of control and auditability that regulators expect from systemically important firms.

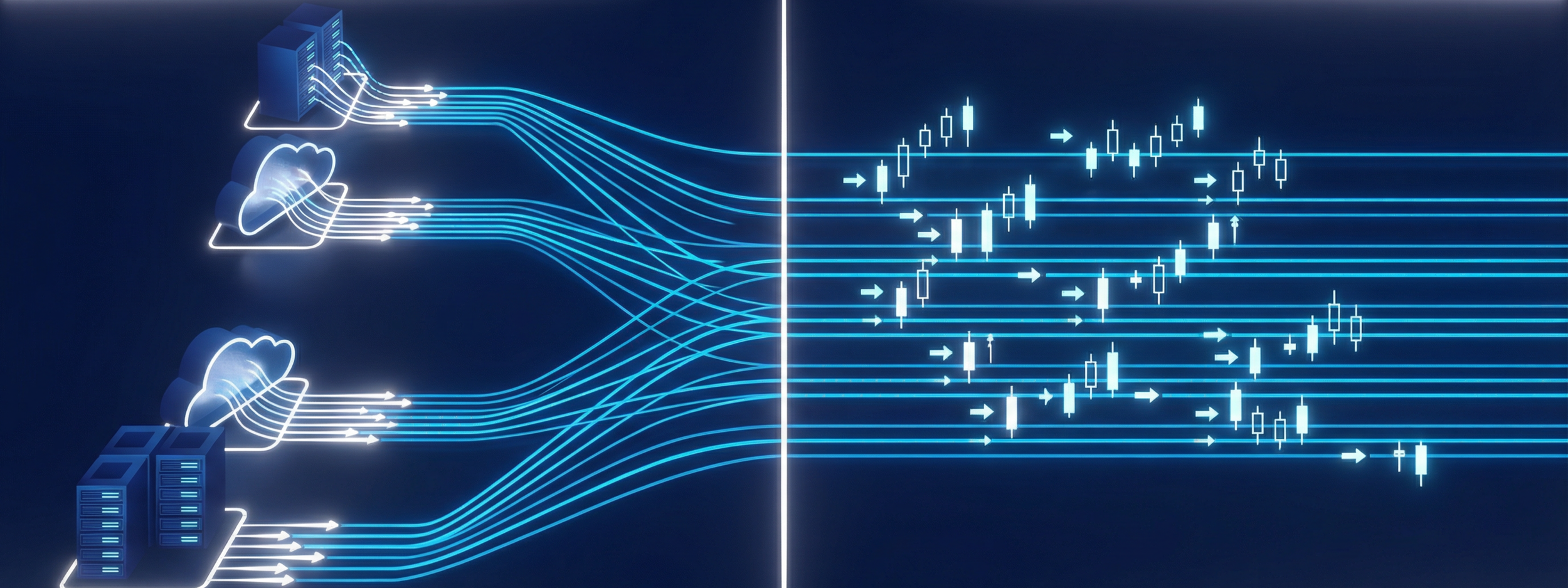

The architecture they landed on was pragmatic. Core trading systems would remain in colocated data centres, where latency and determinism could be tightly managed. New services, regional expansion, and analytics workloads would increasingly run in AWS and Azure. From a systems perspective, it was a reasonable compromise.

Time, however, was treated as an implementation detail rather than a design decision.

An Assumption That Held Until It Didn’t

In their on-prem environments, timing had never really been questioned. It came from dedicated appliances, ultimately tied back to national UTC sources. The setup was familiar, well understood, and had passed years of internal and external scrutiny. When workloads moved into the cloud, the assumption was that time would simply come from the cloud provider in the same way compute and storage did.

For a while, that assumption held.

The First Signs of Drift

It was only during a broader internal review, triggered by a compliance workstream, that the cracks began to show. As part of that effort, the bank attempted to validate timestamp consistency across systems spanning both on-prem and cloud environments.

What they found was not catastrophic, but it was uncomfortable. Offsets in the tens of milliseconds appeared between systems that were expected to agree. In isolation, those differences seemed minor. In aggregate, they created ambiguity.

Trades executed in one environment did not line up cleanly with the sequence of events recorded in another. Logs could not always be reconciled without interpretation. The system still functioned, but it no longer told a single, coherent story about what had happened and when.
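A small sketch makes the effect concrete. It is purely illustrative: the event names, the timings, and the 20 ms clock error are invented for the example and are not taken from the bank's systems. It shows how a clock that is wrong by tens of milliseconds can make the logged sequence disagree with the actual one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    name: str
    true_time_ms: float     # hypothetical ground truth, unknowable in practice
    clock_offset_ms: float  # error of the recording host's clock relative to UTC

    @property
    def recorded_ms(self) -> float:
        # What ends up in the log: true time plus the host's clock error.
        return self.true_time_ms + self.clock_offset_ms


def order_by_true_time(events):
    """Names of events in the order they really happened."""
    return [e.name for e in sorted(events, key=lambda e: e.true_time_ms)]


def order_by_recorded_time(events):
    """Names of events in the order the logs claim they happened."""
    return [e.name for e in sorted(events, key=lambda e: e.recorded_ms)]


# Illustrative values only: the cloud host's clock runs 20 ms behind.
EVENTS = [
    Event("order_received_onprem", 1000.0, 0.0),
    Event("risk_check_cloud", 1005.0, -20.0),
    Event("order_executed_onprem", 1010.0, 0.0),
]

if __name__ == "__main__":
    print("actual sequence:  ", order_by_true_time(EVENTS))
    print("sequence in logs: ", order_by_recorded_time(EVENTS))
```

In this toy case the logs place the cloud-side risk check before the order was even received. Nothing failed, yet the recorded story no longer matches what happened.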

When It Becomes a Regulatory Problem

That ambiguity became far more serious once it was viewed through a regulatory lens.

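Under MiFID II and similar regimes, the requirement is not simply that systems are roughly aligned. Firms must be able to demonstrate that their timestamps are traceable to UTC within defined tolerances, and that this alignment can be evidenced during an audit. Under MiFID II's RTS 25, for instance, the maximum permitted divergence from UTC ranges from 100 microseconds for high-frequency algorithmic trading to one second for voice trading, so an unexplained offset in the tens of milliseconds falls well outside what most electronic trading activity is allowed.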

When the team began to map their architecture against those requirements, it became clear that parts of their environment, particularly in the cloud, could not meet that standard with confidence.

Why the Existing Model Broke Down

The issue was not that the cloud provider’s time service was wrong. It was that its accuracy could not be proven.

There was no clear chain of traceability back to UTC that would satisfy an auditor. Public NTP introduced variability that could not be fully characterised. And perhaps most importantly, there was no single, authoritative time reference shared consistently across all environments.
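To see why variability over an uncontrolled network path is hard to characterise, it helps to look at how an NTP client estimates its offset. The sketch below uses the standard four-timestamp calculation from the NTP specification. The `simulate_exchange` helper and all delay values are hypothetical and exist only to generate timestamps for the example. The point is that the calculation assumes the outbound and return paths take equal time. When they do not, the estimate is off by half the difference, and the client cannot detect this from the timestamps alone.

```python
def ntp_offset_and_delay(t0, t1, t2, t3):
    """Standard NTP estimate from one request/response exchange.

    t0: client send time   (client clock)
    t1: server receive time (server clock)
    t2: server send time    (server clock)
    t3: client receive time (client clock)
    """
    offset = ((t1 - t0) + (t2 - t3)) / 2
    delay = (t3 - t0) - (t2 - t1)
    return offset, delay


def simulate_exchange(true_offset_ms, forward_ms, return_ms,
                      server_processing_ms=0.0, t0=0.0):
    """Hypothetical helper: build the four timestamps for a known true offset."""
    t1 = t0 + forward_ms + true_offset_ms    # arrival, read on the server clock
    t2 = t1 + server_processing_ms           # reply leaves the server
    t3 = (t2 - true_offset_ms) + return_ms   # arrival, read on the client clock
    return t0, t1, t2, t3


if __name__ == "__main__":
    # Symmetric path: the estimate recovers the true 5 ms offset.
    print(ntp_offset_and_delay(*simulate_exchange(5.0, 10.0, 10.0)))
    # Asymmetric path (30 ms out, 10 ms back): the estimate is wrong by
    # (30 - 10) / 2 = 10 ms, and nothing in the timestamps reveals it.
    print(ntp_offset_and_delay(*simulate_exchange(5.0, 30.0, 10.0)))
```

The only bound the client can derive on its own is that the error is at most half the measured round-trip delay. Over the public internet that bound is typically too loose to support a claim of tight, evidenced alignment.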

What had started as a routine infrastructure evolution had quietly introduced a new category of risk.

Reframing Time as Infrastructure

Addressing it required a shift in how time was understood within the architecture.

Rather than treating it as a local service provided independently within each environment, the bank began to treat time as a shared, critical dependency: something that needed to be designed, controlled, and evidenced end-to-end.

In practice, that meant establishing a common source of time derived directly from national UTC authorities, distributing it through controlled and measurable network paths, and ensuring that systems in both cloud and on-prem environments synchronised against that same reference. It also meant introducing continuous measurement and reporting so that alignment was not just achieved, but demonstrable at any point in time.
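The measurement and reporting side can be sketched in a few lines. This is a minimal illustration and not the bank's tooling: the `OffsetSample` structure, the host names, and the default tolerance of 1000 microseconds are all assumptions made for the example. The applicable tolerance depends on the regime and the type of trading activity. The idea is simply that each host's measured offset from the shared reference is recorded continuously and summarised against a stated limit, so that alignment can be shown rather than asserted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OffsetSample:
    host: str
    environment: str   # e.g. "onprem" or "cloud"
    offset_us: float   # measured offset from the shared reference, in microseconds


def summarise_offsets(samples, tolerance_us=1000.0):
    """Report each host's worst absolute offset against a tolerance.

    tolerance_us is a placeholder value for illustration only.
    """
    by_host = {}
    for s in samples:
        by_host.setdefault((s.environment, s.host), []).append(abs(s.offset_us))

    report = []
    for (environment, host), offsets in sorted(by_host.items()):
        worst = max(offsets)
        report.append({
            "environment": environment,
            "host": host,
            "samples": len(offsets),
            "worst_abs_offset_us": worst,
            "within_tolerance": worst <= tolerance_us,
        })
    return report


if __name__ == "__main__":
    # Invented measurements: one on-prem host, one cloud host.
    samples = [
        OffsetSample("trade-gw-1", "onprem", 12.0),
        OffsetSample("trade-gw-1", "onprem", -35.0),
        OffsetSample("analytics-1", "cloud", 400.0),
        OffsetSample("analytics-1", "cloud", -23000.0),
    ]
    for row in summarise_offsets(samples):
        print(row)
```

Reporting the worst case instead of the average is a deliberate choice here: an audit question is usually whether a limit was ever exceeded, not whether the clock was close on average.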

What Changed

Once implemented, the change did not alter the behaviour of any single application in a visible way. There was no new feature, no performance breakthrough that traders could point to.

What changed instead was the consistency of the underlying system.

Events lined up without reconciliation. Logs told the same story regardless of where they were generated. Compliance teams were able to demonstrate traceability with evidence rather than assumption. The cloud environment, which had previously been an outlier, became part of a unified and auditable whole.

The Broader Pattern

This is a pattern that is becoming increasingly common. The move to the cloud does not typically introduce obvious failures. Systems continue to run, trades continue to execute, and metrics often look acceptable at a glance.

What changes is the level of certainty. Small discrepancies accumulate in ways that only become visible under scrutiny, particularly when regulatory requirements demand precision and proof.

By the time those discrepancies are discovered, the problem is no longer purely technical. It sits at the intersection of infrastructure, risk, and compliance, and requires a more deliberate approach to something that was previously taken for granted.

Time, in that sense, stops being an invisible utility and becomes part of the system that must be explicitly designed and defended.